
By: Fern Sidman

A disturbing new investigation has cast a harsh spotlight on the inner workings of social media algorithms, alleging that one of the world's most influential digital platforms is not merely hosting antisemitic content but actively promoting it. The findings, reported on Thursday in The Algemeiner, raise profound questions about the responsibilities of technology companies, the ethics of algorithmic design, and the societal consequences of engagement-driven digital ecosystems.

At the center of the controversy is Instagram, owned by the tech conglomerate Meta, whose recommendation systems are now accused of systematically amplifying hateful content to vast audiences. According to a comprehensive report released by the Combat Antisemitism Movement’s Antisemitism Research Center, the platform’s internal mechanisms may be functioning as a conduit for bigotry on an unprecedented scale.

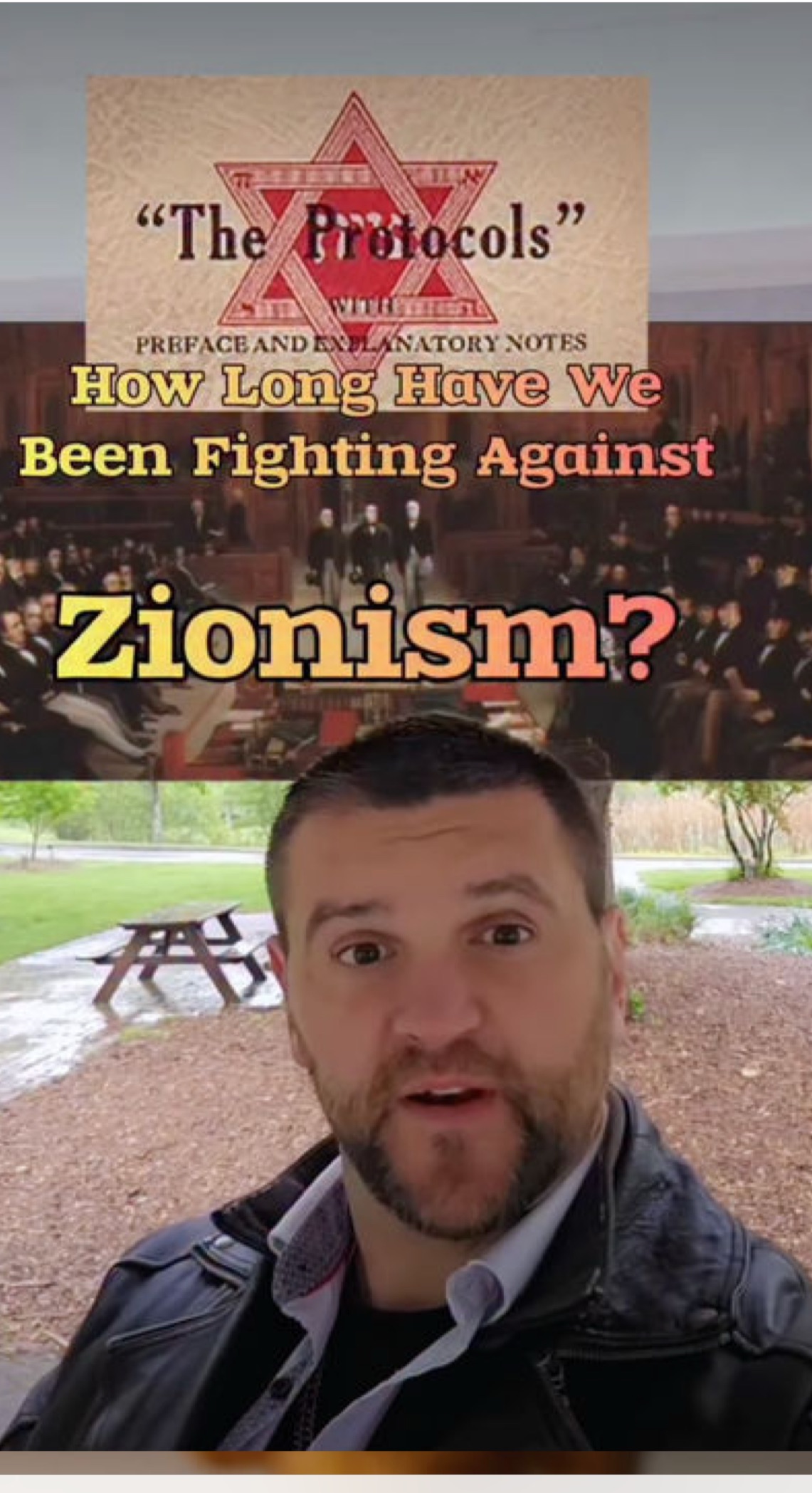

The report, titled “Engineered Exposure: How Antisemitic Content Is Pushed and Amplified to Millions Across Instagram,” represents one of the most detailed examinations to date of how algorithmic curation influences user experience. Over a 96-hour period, researchers tracked 100 distinct antisemitic posts that were not actively sought out but instead recommended to users through Instagram’s automated systems.

The results were staggering. As highlighted in coverage by The Algemeiner, these posts generated approximately 5.3 million likes and 3.8 million shares, with analysts estimating that the content ultimately reached as many as 280 million users worldwide.

What makes these findings particularly alarming is the methodology employed. Rather than deliberately searching for extremist material, researchers simply engaged with the platform as ordinary users would, allowing Instagram’s recommendation engine to dictate what content appeared in their feeds. The conclusion was inescapable: exposure to antisemitic narratives does not require active intent. The system itself appears to facilitate and accelerate such exposure.

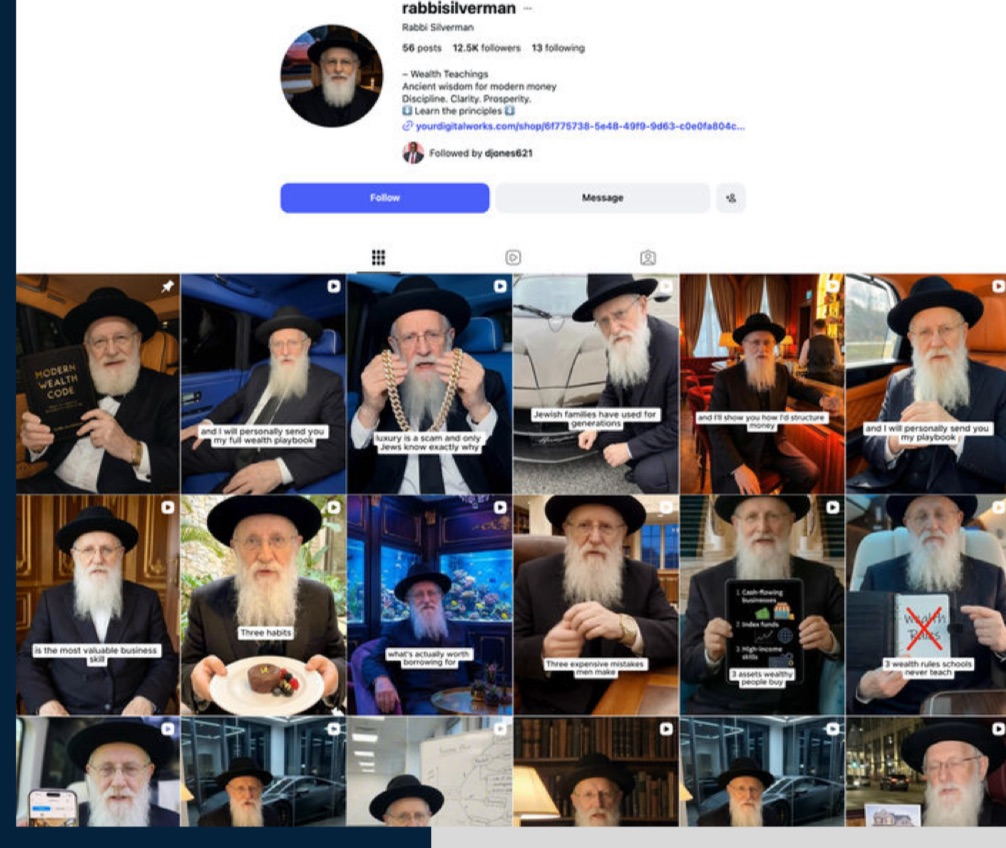

Among the most unsettling elements of the report is the discovery of artificially generated personas masquerading as religious authorities. Investigators identified multiple accounts presenting themselves as rabbis—figures traditionally associated with spiritual guidance and moral authority—that were in fact entirely fabricated.

One such account, operating under the name “Rabbi Goldman,” amassed more than 1.4 million followers while disseminating conspiracy theories rooted in longstanding antisemitic tropes. According to The Algemeiner report, the account regularly published content alleging Jewish control over global financial systems, with some videos attracting millions of views.

In total, researchers identified at least a dozen such fictitious rabbinical figures, collectively attracting over two million followers. Each account was crafted with a distinct persona, lending an air of authenticity that made the underlying propaganda all the more insidious. By appropriating religious imagery and authority, these accounts were able to cloak their messaging in a veneer of legitimacy.

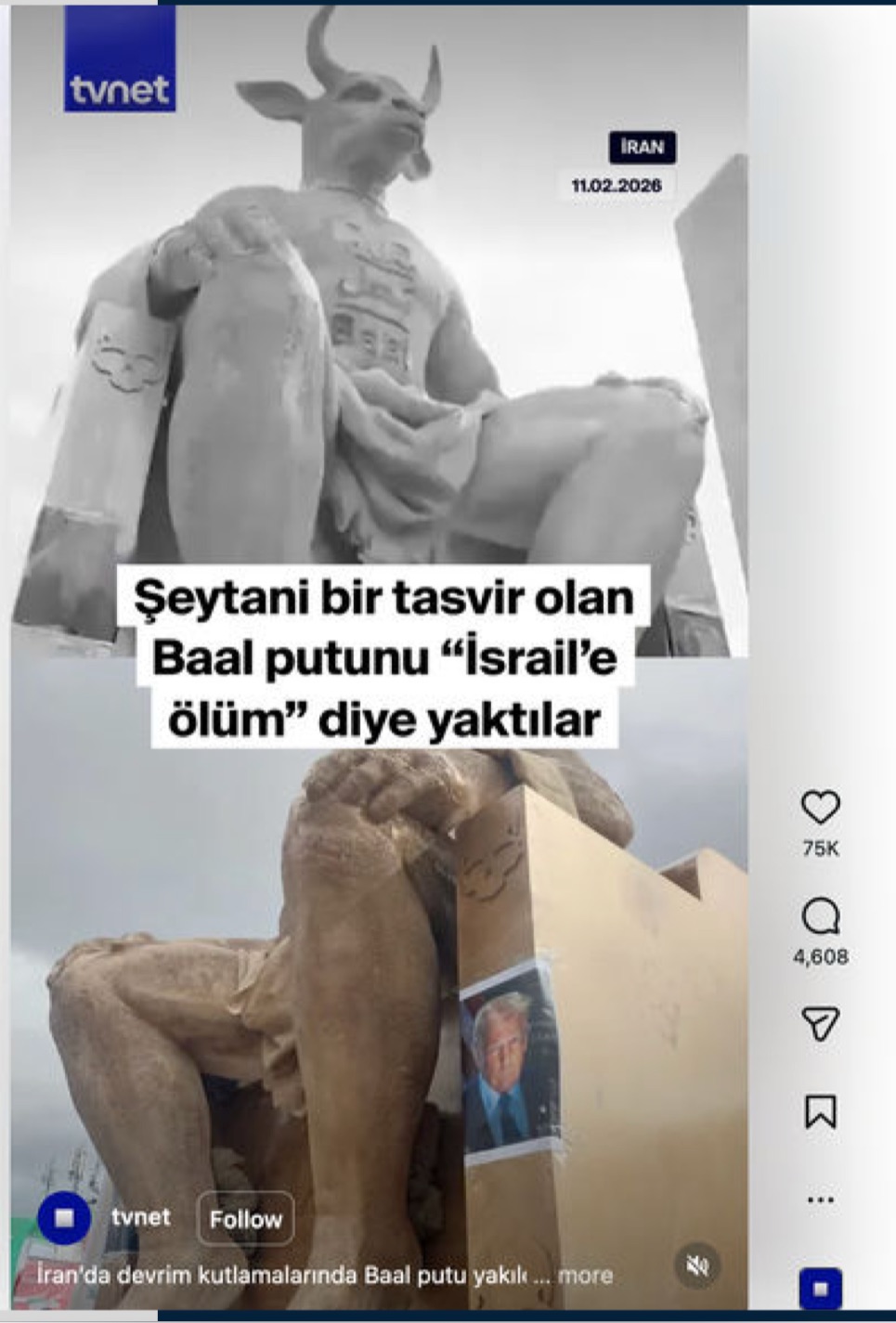

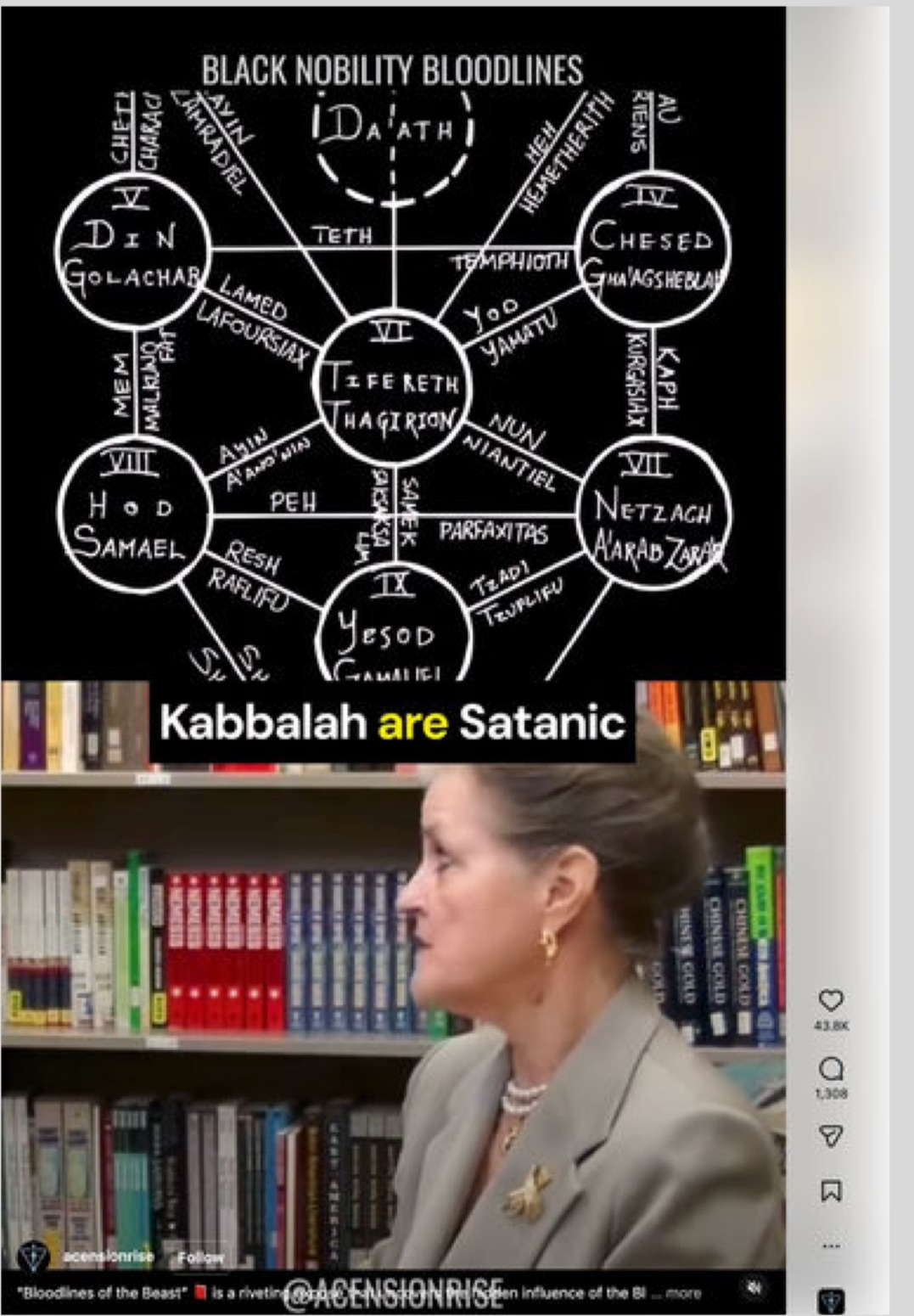

The content analyzed in the report reveals a striking continuity between historical antisemitic narratives and their modern digital incarnations. References to occult themes—demons, satanic imagery, and conspiratorial symbols—were pervasive, echoing medieval accusations that have persisted for centuries.

As detailed in analyses referenced in The Algemeiner, these narratives often invoke figures such as Baal, an ancient deity associated with Canaanite mythology, to suggest that Jews are engaged in clandestine and malevolent practices. Such claims, though patently false, resonate with audiences predisposed to conspiratorial thinking, particularly when presented through visually compelling and emotionally charged content.

The persistence of these themes underscores the adaptability of antisemitic ideology. While the medium has evolved—from pamphlets and broadcasts to social media feeds—the underlying narratives remain remarkably consistent, repackaged to suit contemporary sensibilities.

Perhaps most troubling is the trajectory observed in many of the analyzed posts, which often begin with conspiracy theories before escalating into explicit calls for violence. One example highlighted in the report involves a video attributing global crises—from wars to economic instability—to a single Jewish family, before advocating their elimination as a solution.

This progression from misinformation to incitement represents a critical threshold. As noted in The Algemeiner report, the normalization of such rhetoric within widely accessible platforms creates an environment in which extreme ideas can gain traction and legitimacy.

The implications extend beyond the digital sphere. History has repeatedly demonstrated that dehumanizing narratives can lay the groundwork for real-world violence. In this context, the role of social media platforms becomes not merely a matter of content moderation but of public safety.

Underlying these dynamics is a powerful economic incentive structure. Social media platforms, including Instagram, derive substantial revenue from user engagement, with advertising models that reward content capable of capturing attention and provoking interaction.

Meta’s financial performance illustrates the scale of this incentive. As reported in data cited by The Algemeiner, the company’s revenue has grown dramatically in recent years, surpassing $200 billion in 2025. This growth has been driven in large part by increased user engagement—a metric that controversial and emotionally charged content often enhances.

Critics argue that this creates a perverse incentive, wherein the very content that generates the most engagement is also the most likely to be harmful. While there is no definitive evidence that Meta deliberately promotes antisemitic material, the alignment between engagement-driven algorithms and the proliferation of such content raises serious ethical questions.

The revelations come at a moment of heightened legal scrutiny for Meta and the broader technology sector. As reported and analyzed by The Algemeiner, the company recently suffered significant legal setbacks in two high-profile cases related to user safety.

In one case, a jury in New Mexico found Meta liable for failing to protect minors from harmful content, imposing a $375 million penalty. In another, a California jury held both Meta and Alphabet responsible for contributing to the mental health deterioration of a young user, awarding substantial damages.

These rulings represent a potential turning point in the legal landscape governing social media. For decades, platforms have relied on the protections of Section 230 of the Communications Decency Act, which shields them from liability for user-generated content. In the recent cases, however, plaintiffs shifted the focus from content to design, arguing that the platforms' algorithms and features themselves may constitute harmful products.

Legal experts suggest that this shift could open the door to a wave of litigation, fundamentally altering the regulatory environment in which these companies operate.

In response to the report, Sacha Roytman, chief executive of the Combat Antisemitism Movement, characterized the findings as evidence of a systemic failure. He called on Meta to implement transparent and robust safeguards to prevent the amplification of hate.

His remarks, echoed in coverage by The Algemeiner, reflect a growing consensus among advocacy groups that voluntary measures are insufficient. Instead, they argue, meaningful change will require a combination of corporate accountability, regulatory oversight, and public pressure.

The implications of these findings extend far beyond a single platform or a single form of prejudice. They speak to a broader challenge facing modern societies: how to harness the transformative power of digital technology while mitigating its capacity for harm.

Social media has become a central arena for public discourse, shaping perceptions, influencing behavior, and, increasingly, determining the boundaries of acceptable speech. When the mechanisms governing this discourse prioritize engagement over integrity, the consequences can be profound.

The investigation highlighted in The Algemeiner report represents a watershed moment in the ongoing debate over the role of technology in society. It challenges platforms, policymakers, and users alike to confront uncomfortable truths about the systems that mediate our interactions.

At stake is not merely the reputation of a single company but the integrity of the digital public square. If the findings are borne out, they suggest that the architecture of social media itself may be contributing to the spread of hatred on a global scale.

Addressing this challenge will require more than incremental adjustments. It will demand a fundamental rethinking of how algorithms are designed, how platforms are regulated, and how societies balance the competing imperatives of free expression and collective safety.

In an era defined by unprecedented connectivity, the question is no longer whether technology can shape our world—it is whether we can shape technology to reflect our highest values rather than our darkest impulses.